AI-Driven Inventory Optimization: $100M+ Immediate Savings

Leveraging AI and cross-functional leadership to save $100M+ in a single quarter through memory re-qualification

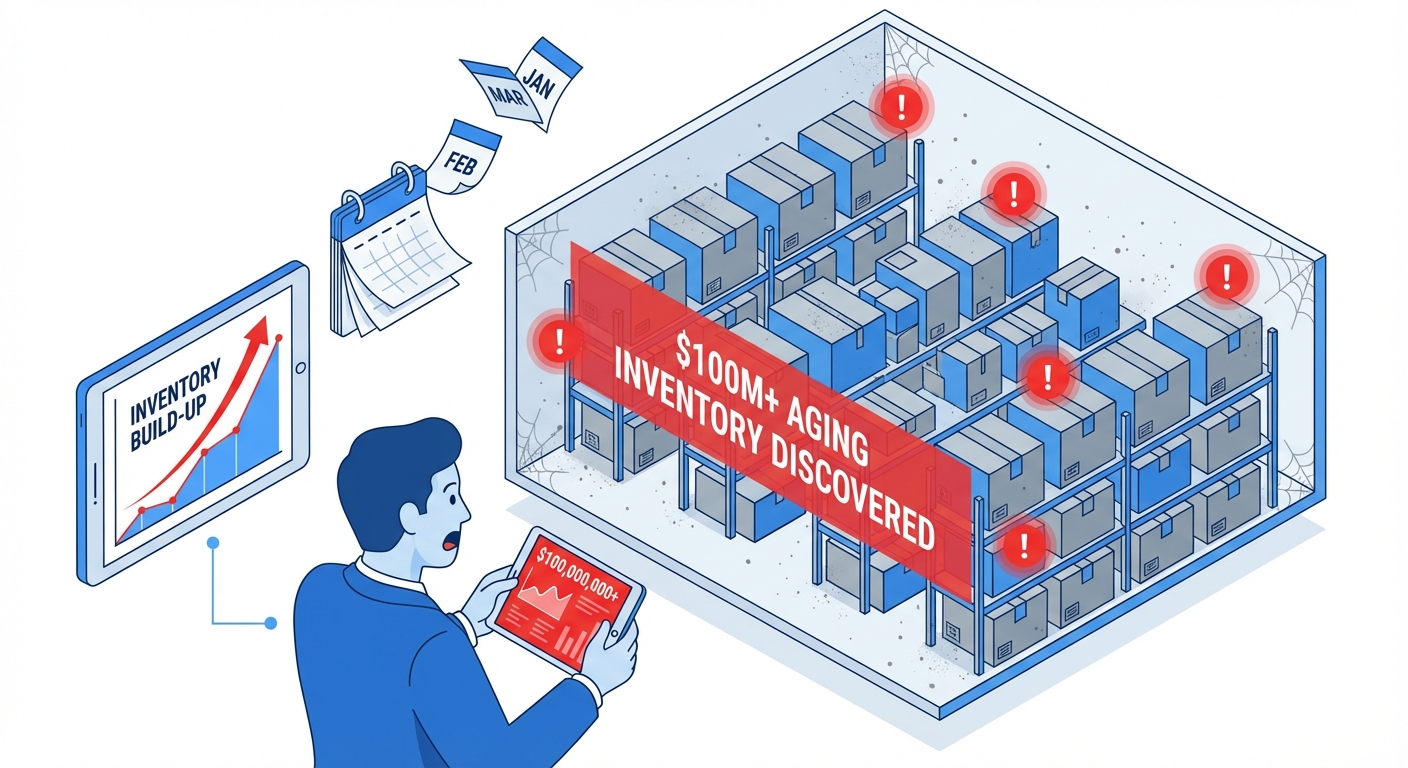

The Challenge

I personally built an analytical pipeline that matched inventory data against the supply and demand in our data center build plans. It revealed a $100M+ inventory build-up that required immediate action.

The technical reality: data center build plans and bills of material had shifted in a way that left inventory stranded. Specifically, the memory type in our inventory had been qualified for some server builds but not for the servers in the latest build plan, even though the memory type was nearly identical.

The organizational challenge: I discovered that the key stakeholders—capacity planners, commodity managers, finance, and data-center engineering—weren’t actively connecting at the time. Each team held part of the puzzle, but no one saw the complete picture.

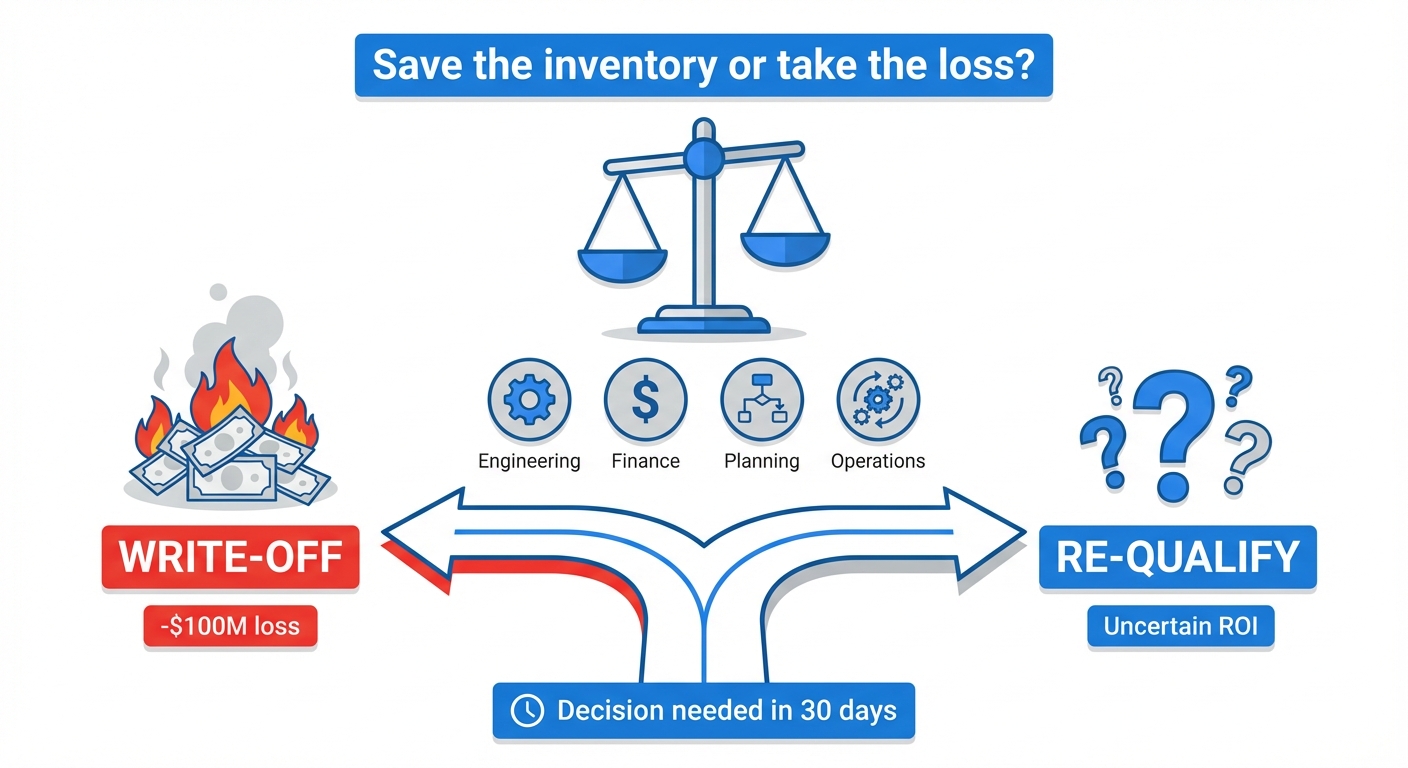

The decision facing leadership: write off $100M+ of inventory (massive immediate loss) or invest in re-qualification (uncertain ROI). Either path had significant financial implications, and the organization needed certainty.

My Approach: A Four-Step Framework

This was classic zero-to-one problem solving—no playbook existed for this situation. I personally drove each step of the solution:

The Analysis: Built Pipeline to Identify $100M+ Memory Inventory Crisis

I personally built an analytical pipeline that connected inventory data with data center build plans to match supply against demand. This revealed that shifts in bills of material had created $100M+ of stranded memory inventory: memory that had been qualified for older server builds but not for the new ones.
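As a rough illustration of the matching logic described above (not the actual pipeline), the sketch below allocates each inventory lot only to build-plan demand it is qualified for and reports whatever is left over as stranded. The class names, the `stranded_inventory` function, the part and server identifiers, and all quantities are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryLot:
    part: str         # memory part identifier (hypothetical)
    units: int        # units on hand
    unit_cost: float  # cost per unit, in any consistent currency


@dataclass(frozen=True)
class BuildDemand:
    server: str  # server platform in the build plan (hypothetical)
    units: int   # memory units the build plan calls for


def stranded_inventory(lots, demands, qualified):
    """Return {part: (stranded_units, stranded_value)}.

    `qualified` is a set of (part, server) pairs. A lot may only be
    consumed by demand for a server it is qualified on; anything left
    after a simple greedy allocation counts as stranded.
    """
    remaining_demand = {d.server: d.units for d in demands}
    stranded = {}
    for lot in lots:
        units_left = lot.units
        for server in remaining_demand:
            if (lot.part, server) not in qualified:
                continue  # not qualified: this demand cannot absorb the lot
            used = min(units_left, remaining_demand[server])
            remaining_demand[server] -= used
            units_left -= used
        if units_left > 0:
            prev_units, prev_value = stranded.get(lot.part, (0, 0.0))
            stranded[lot.part] = (
                prev_units + units_left,
                prev_value + units_left * lot.unit_cost,
            )
    return stranded


# Placeholder data: the part is qualified only for the older server generation.
lots = [InventoryLot("MEM-A", 1000, 50.0)]
demands = [BuildDemand("server-gen1", 200), BuildDemand("server-gen2", 900)]
qualified = {("MEM-A", "server-gen1")}

print(stranded_inventory(lots, demands, qualified))
# Adding the missing qualification lets the newer build plan absorb the stock.
print(stranded_inventory(lots, demands, qualified | {("MEM-A", "server-gen2")}))
```

In this toy data, the second call shows why re-qualification matters: the same stock stops being stranded once the newer server is added to the qualified set.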

The Root Cause: United Disconnected Stakeholders to Find the "Why"

I brought together the key stakeholders who weren't actively connecting at the time—capacity planners, commodity managers, finance, and data-center engineering. Through cross-functional detective work, we discovered the **memory type qualification issue**: the memory in inventory was nearly identical to what was needed but hadn't been certified for new server builds.

The Business Case: Proved Re-qualification ROI Through Stakeholder Interviews

I interviewed stakeholders and ran an ROI analysis proving that the cost to re-qualify the memory for new servers was a **tiny fraction of the loss we'd take from a write-off**. The business case was clear: invest weeks in re-qualification to save $100M+. Leadership approved immediately.
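The shape of that comparison can be sketched as a small expected-value calculation. This is an illustrative assumption about how such a case might be framed, not the analysis that was actually run; the function name, its parameters, and the example figures are placeholders, not project data.

```python
def requalification_case(inventory_value, requal_cost, success_probability,
                         salvage_value=0.0):
    """Compare writing inventory off against attempting re-qualification.

    All inputs are hypothetical and share one currency unit.
    If re-qualification fails, the write-off loss is still incurred
    on top of the re-qualification cost.
    """
    write_off_loss = inventory_value - salvage_value
    expected_requal_loss = (
        requal_cost + (1.0 - success_probability) * write_off_loss
    )
    return {
        "write_off_loss": write_off_loss,
        "expected_requal_loss": expected_requal_loss,
        "expected_savings": write_off_loss - expected_requal_loss,
        # Minimum success probability at which re-qualification pays off.
        "break_even_probability": requal_cost / write_off_loss,
    }


# Placeholder figures chosen only to show the mechanics.
print(requalification_case(inventory_value=100.0, requal_cost=1.0,
                           success_probability=0.5))
```

When the re-qualification cost is a small fraction of the write-off loss, the break-even probability is correspondingly low, which is what makes the decision straightforward even when the outcome is uncertain.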

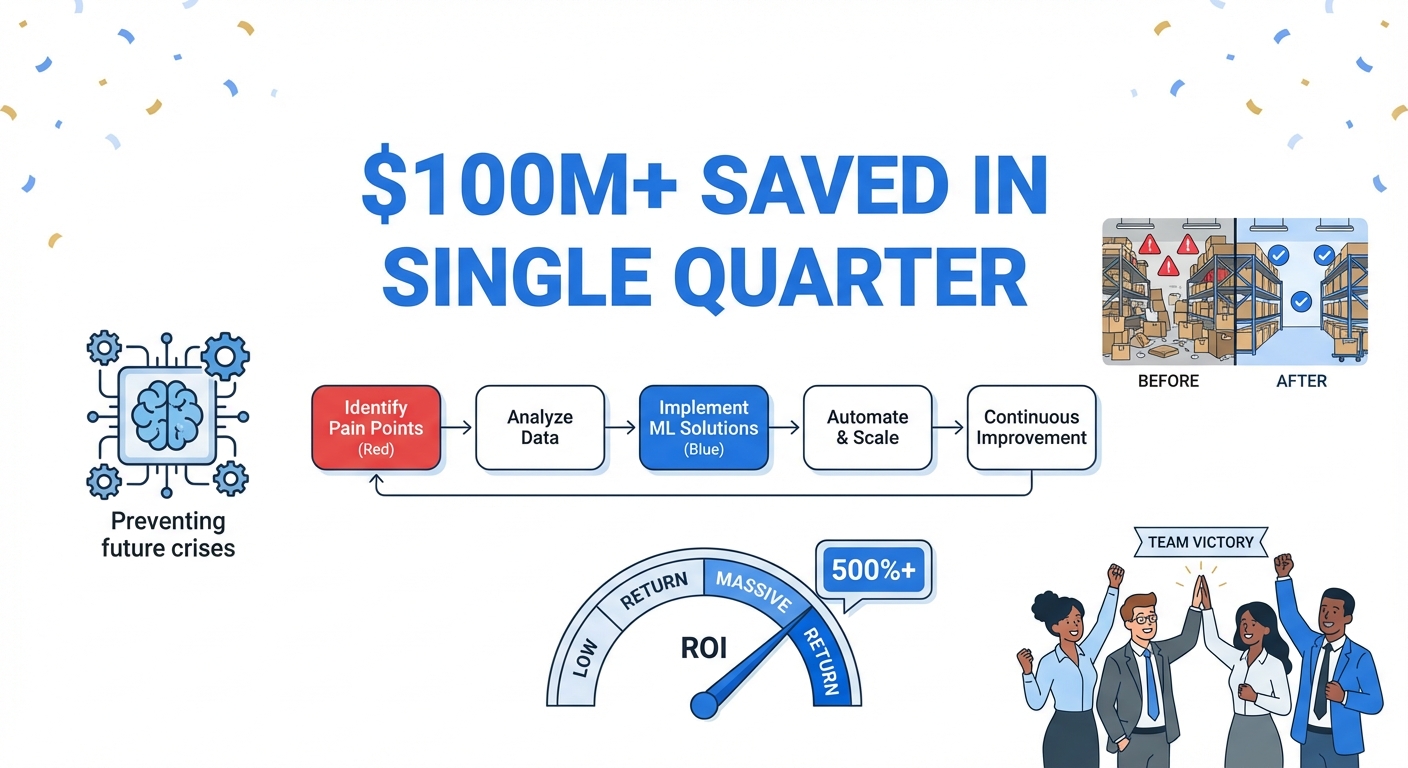

The AI Solution: Built ML Algorithm for Ongoing Prevention

We didn't stop at the one-time fix. We used this success to justify an engagement with Data Science, and together we developed an **ML algorithm** that could predict and prevent similar issues. The model automated identification of qualification mismatches before they became crises, transforming reactive firefighting into proactive optimization.

The Outcome

$100M+ saved in a single quarter through memory re-qualification and ML-based prevention

The solution not only resolved the immediate inventory challenge but created a repeatable framework for ongoing asset optimization and prevention.

This project demonstrated the power of combining analytical rigor with AI/ML capabilities—not just solving the immediate problem, but building systems that prevent it from recurring.