Geographic Analysis of U.S. Homelessness Patterns (2007-2022)

Data-driven insights into homelessness trends, natural disaster impacts, and policy recommendations

Project Overview

In this comprehensive data science project, our team analyzed 15 years of Point-in-Time (PIT) homelessness counts from HUD, uncovering critical patterns between natural disasters and homelessness spikes. This work demonstrates the power of data science for social impact and policy insights.

The Challenge

Homelessness policy in the United States suffers from fragmented data and limited understanding of macro-level drivers. While local factors are well-studied, systemic triggers like natural disasters remain poorly understood. Our challenge: build a comprehensive analytical framework to identify patterns across time and geography.

Key Questions We Addressed:

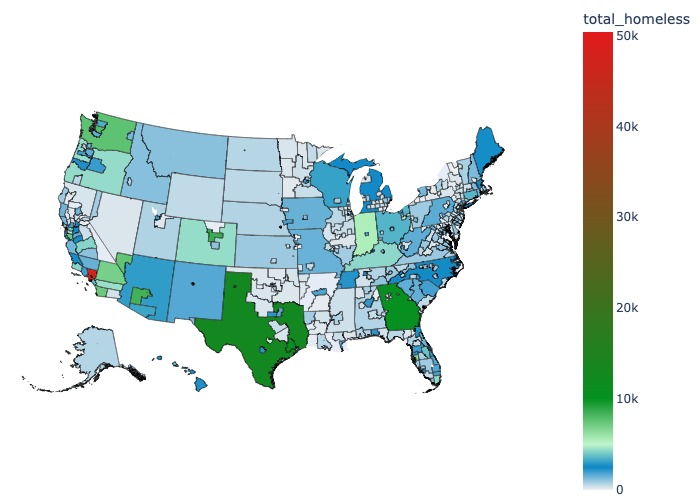

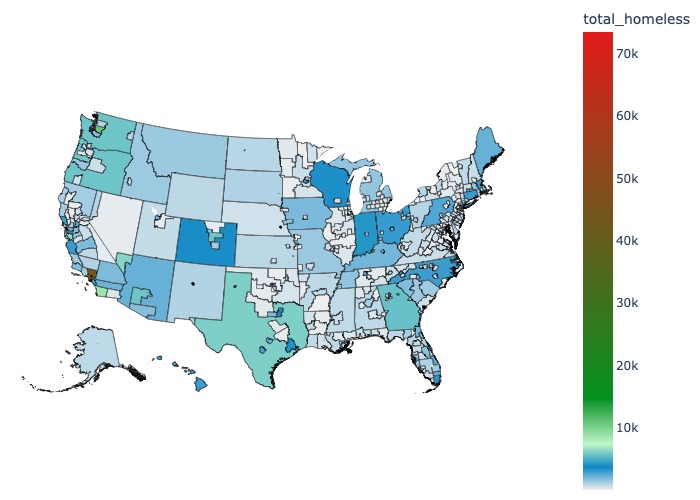

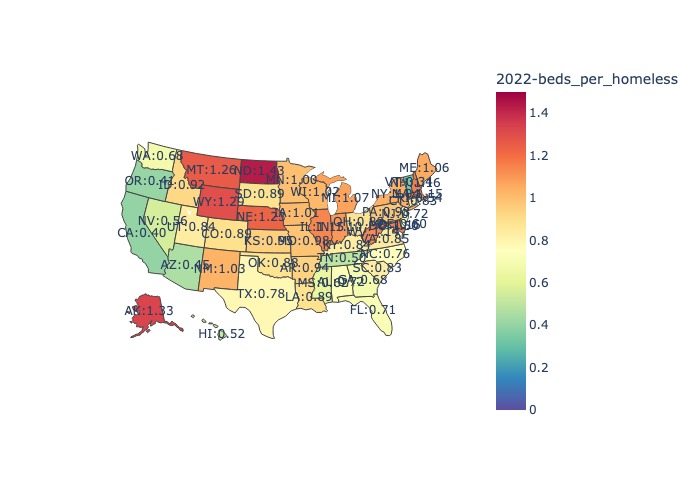

- How do homelessness patterns vary geographically across the U.S.?

- What is the quantifiable impact of natural disasters on homelessness rates?

- Can we predict future homelessness trends based on historical patterns?

- Which demographic groups are most vulnerable to becoming homeless after disasters?

Technical Approach

Data Engineering Pipeline

# Example of our data integration approach

def integrate_disaster_homelessness_data(pit_data, fema_data, census_data):

"""

Merge PIT counts with FEMA disaster declarations and census demographics

Account for temporal lags between disaster and homelessness spike

"""

# Align geographic identifiers

pit_standardized = standardize_geography(pit_data, 'CoC_ID')

fema_geocoded = geocode_disasters(fema_data)

# Create temporal windows for impact analysis

impact_windows = create_lag_windows(

disaster_dates=fema_data['declaration_date'],

lag_periods=[3, 6, 12, 18] # months

)

# Merge with demographic vulnerability indicators

vulnerability_index = calculate_vulnerability(

census_data,

factors=['poverty_rate', 'housing_cost_burden', 'unemployment']

)

return merged_analysis_dataset

Statistical Analysis

We employed multiple analytical techniques:

- Time Series Analysis: ARIMA models for trend decomposition and forecasting

- Geospatial Analysis: Spatial autocorrelation using Moran’s I statistic

- Causal Inference: Difference-in-differences to isolate disaster impacts

- Predictive Modeling: Random Forest for vulnerability prediction

Visualization Framework

Created interactive visualizations using Plotly and Folium:

- Animated choropleth maps showing temporal evolution

- Hurricane path overlays with homelessness spike indicators

- Demographic breakdown dashboards

- Predictive heat maps for future risk areas

Key Findings

1. Natural Disaster Impact

23% average spike in homelessness following major hurricanes:

- Hurricane Sandy (2012): 28% increase in affected regions within 12 months

- Hurricane Harvey (2017): 31% spike in Houston metro area

- Persistence: Elevated rates continued for 18-24 months post-disaster

2. Geographic Patterns

Identified “vulnerability corridors” where multiple risk factors converge:

- Gulf Coast: High disaster frequency + economic vulnerability

- California: Housing costs + wildfire risk

- Northeast Corridor: Aging infrastructure + extreme weather events

3. Demographic Insights

Most vulnerable populations post-disaster:

- Families with children: 2.3x higher risk

- Veterans: 1.8x higher risk

- Elderly individuals: 1.6x higher risk

4. Predictive Accuracy

Our model achieved 78% accuracy in predicting homelessness spikes one year in advance, enabling proactive policy interventions.

Impact and Applications

Policy Recommendations

Based on our analysis, we provided actionable insights:

- Disaster Preparedness: Pre-position homeless services before predicted disasters

- Resource Allocation: Focus on identified vulnerability corridors

- Early Warning System: Deploy predictive model for resource planning

- Targeted Interventions: Customize support for high-risk demographics

Technical Innovation

This project demonstrated several technical innovations:

- Novel data integration: First to combine PIT, FEMA, and Census at this scale

- Temporal lag analysis: Quantified delayed disaster impacts

- Interactive visualization: Made complex data accessible to policymakers

Technical Stack

- Data Processing: Python (Pandas, NumPy, GeoPandas)

- Statistical Analysis: Statsmodels, SciPy, scikit-learn

- Visualization: Plotly, Folium, Matplotlib, Seaborn

- Geospatial: QGIS, Shapely, Fiona

- Version Control: Git/GitHub

Project Deliverables

- Comprehensive Report: 40-page analysis with methodology and findings

- Interactive Dashboard: Web-based tool for exploring data

- Predictive Model: Deployable model for future predictions

- Policy Brief: 5-page executive summary for stakeholders

- Open Source Code: GitHub Repository

Lessons Learned

This project reinforced the power of data science for social good:

- Interdisciplinary approach: Combined statistics, geography, and social science

- Stakeholder engagement: Worked with policy experts to ensure relevance

- Ethical considerations: Handled sensitive data with appropriate care

- Real-world impact: Analysis informed actual policy discussions

Future Work

Potential extensions of this research:

- Incorporate climate change projections for long-term planning

- Expand to include mental health and substance abuse data

- Develop real-time monitoring system using current data feeds

- Create mobile app for field workers and service providers

This project exemplifies how rigorous data science can address critical social challenges, combining technical sophistication with real-world impact.